A GDPR compliance checklist is a set of guidelines and requirements that organizations follow to comply with the General Data Protection Regulation (GDPR). It covers measures such as obtaining valid consent, implementing robust data protection measures, conducting privacy impact assessments, facilitating individual rights, establishing cross-border data transfer mechanisms, appointing a Data Protection Officer (DPO), conducting regular audits and assessments, and maintaining detailed records to demonstrate compliance.

| GDPR Compliance Checklist | Description |
|---|---|
| Understand the scope and applicability of the GDPR to your organization | Determine if your organization processes personal data of individuals in the EU and assess whether you fall under the GDPR’s jurisdiction. |
| Designate a Data Protection Officer (DPO) if required | Appoint a DPO if your organization meets the criteria outlined in the GDPR, such as being a public authority or engaging in large-scale processing of personal data. |
| Develop and implement data protection policies and procedures | Create internal policies and procedures that outline how your organization will handle personal data, ensuring compliance with GDPR principles and requirements. |
| Obtain lawful and valid consent for data processing activities | Where consent is the legal basis for processing, ensure that it is freely given, specific, informed, and unambiguous before processing individuals’ personal data, and document the consent process to demonstrate compliance. |
| Review and update privacy notices and policies to comply with GDPR requirements | Revise your privacy notices and policies to provide clear and transparent information to individuals about how their personal data is processed, including purposes, legal basis, retention periods, and rights. |
| Implement appropriate security measures to protect personal data | Establish technical and organizational safeguards to protect personal data against unauthorized access, loss, or destruction, taking into account the risks associated with data processing. |
| Conduct data protection impact assessments (DPIAs) for high-risk processing activities | Identify and assess potential risks to individuals’ privacy rights and freedoms, and conduct DPIAs for processing activities that are likely to result in high risks. |
| Establish procedures to handle data subject rights | Put in place processes and mechanisms to handle individuals’ rights, including access, rectification, erasure, restriction of processing, data portability, and objection. |
| Establish mechanisms for securely transferring personal data outside the EEA | Implement appropriate safeguards, such as standard contractual clauses or binding corporate rules, when transferring personal data to countries outside the EEA. |
| Implement procedures to handle data breaches | Establish an incident response plan to detect, assess, and respond to data breaches, and notify the relevant supervisory authority and affected individuals within the required timeframe, if necessary. |
| Assess and review data processing agreements with third-party vendors and processors | Review and update contracts with third-party vendors or processors to ensure they meet GDPR requirements and address data protection obligations. |
| Train employees on data protection obligations and best practices | Provide regular training to employees regarding their responsibilities, data protection principles, and best practices for handling personal data in compliance with the GDPR. |
| Document data processing activities | Maintain a record of your organization’s data processing activities, including purposes, categories of personal data, recipients, retention periods, and any cross-border transfers. |
| Establish procedures for data retention and deletion | Implement policies and procedures to determine appropriate retention periods for different categories of personal data and ensure timely deletion or anonymization when data is no longer needed. |
| Develop a process for handling data subject requests and inquiries | Establish a system for receiving, responding to, and resolving data subject requests, such as access, rectification, erasure, or objection, in a timely and compliant manner. |
| Regularly review and update privacy and security measures | Continuously monitor, assess, and improve your organization’s privacy and security measures to adapt to changing risks, technologies, and legal requirements. |
| Conduct internal audits to assess compliance | Regularly conduct internal audits and reviews of your organization’s data protection practices to identify any non-compliance issues and address them promptly. |
| Establish procedures for monitoring and reporting data protection compliance | Implement mechanisms to monitor and document compliance efforts, such as privacy impact assessments, records of processing activities, and incident reporting. |
| Maintain documentation and records | Keep comprehensive documentation and records of your organization’s GDPR compliance efforts, including policies, procedures, training records, and any data protection impact assessments conducted. |
| Stay informed about GDPR updates and guidance | Stay up to date with changes to GDPR regulations and guidance provided by relevant supervisory authorities to ensure ongoing compliance with evolving requirements and best practices. |

GDPR Compliance Preparation Checklist

The General Data Protection Regulation was adopted in 2016 and has applied since 25 May 2018. Yet, according to Spiceworks, only 2% of IT professionals surveyed within the European Union (EU) felt that their company was fully prepared for the GDPR twelve months before that date. The same figure applied to professionals within the US, and it was only slightly higher, at 5%, for professionals within the UK. This was a worrying statistic, given that compliance is necessary for companies to avoid significant fines and other sanctions.

So, what do companies need to do to ensure that they are compliant with the GDPR? Here is a checklist of preparations that need to be completed.

| GDPR Compliance Preparation Checklist | Description |
|---|---|
| Awareness and Understanding | Educate key stakeholders and staff about the GDPR’s requirements, principles, and implications for the organization. |
| Data Mapping | Conduct a comprehensive audit and documentation of personal data processing activities, including data flows, sources, and purposes. |
| Legal Basis for Processing | Review and identify the appropriate legal basis for processing personal data, such as consent, contractual necessity, legal obligation, vital interests, legitimate interests, or public task. |
| Data Subject Rights | Develop procedures and mechanisms to handle individuals’ rights, including the rights of access, rectification, erasure, restriction of processing, data portability, and objection to processing. |
| Privacy Notices and Policies | Update privacy notices and policies to align with GDPR requirements, ensuring clear and transparent communication about data processing activities, legal basis, retention periods, and individuals’ rights. |
| Consent Mechanisms | Establish clear procedures for obtaining valid consent, ensuring it is freely given, specific, informed, and unambiguous, and providing individuals with the ability to withdraw consent easily. |
| Data Protection Impact Assessments (DPIAs) | Conduct DPIAs for high-risk processing activities, identifying and mitigating privacy risks and ensuring data protection measures are implemented. |
| Security Measures | Implement appropriate technical and organizational security measures to protect personal data from unauthorized access, loss, or damage, considering encryption, pseudonymization, access controls, and regular security testing. |
| Data Breach Response | Develop an incident response plan to detect, assess, and report data breaches promptly to the relevant supervisory authority and affected individuals, if necessary. |
| Vendor Management | Assess and update contracts with third-party vendors and processors to ensure they meet GDPR requirements, including data protection obligations and appropriate safeguards. |
| Employee Training | Provide comprehensive training to staff on data protection principles, responsibilities, and secure handling of personal data. |
| Data Transfer Mechanisms | Ensure that any transfer of personal data outside the EEA is carried out in compliance with GDPR provisions, using appropriate safeguards such as standard contractual clauses or binding corporate rules. |
| Data Retention and Disposal | Establish policies and procedures for data retention, specifying retention periods and secure disposal methods in line with legal requirements and data minimization principles. |
| Data Protection Officer (DPO) | Appoint a DPO if required by the GDPR, ensuring they have the necessary expertise and independence to oversee data protection efforts. |
| Documentation and Record-keeping | Maintain detailed records of data processing activities, data subject requests, consent, DPIAs, security measures, and any other documentation to demonstrate GDPR compliance. |
| Regular Auditing and Review | Conduct periodic audits and assessments of data protection practices to identify gaps, ensure ongoing compliance, and continuously improve data protection measures. |

GDPR Basics

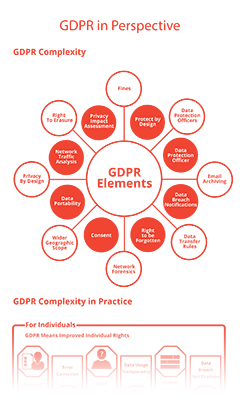

GDPR compliance is crucial for organizations that handle personal data of individuals in the European Union (EU). The GDPR establishes a comprehensive framework for the protection of personal data, aiming to give individuals greater control over their information while imposing strict obligations on organizations. Compliance involves understanding and implementing its key principles, adopting appropriate data protection measures, and respecting individuals’ rights.

To achieve GDPR compliance, organizations must adhere to fundamental principles such as lawfulness, fairness, and transparency in data processing. This requires having a legal basis for processing personal data, being transparent about data processing activities, and providing individuals with clear and accessible privacy notices. Organizations must also practise data minimization by collecting and processing only necessary data, and apply storage limitation by retaining data only for as long as necessary. Moreover, organizations must prioritize data accuracy, integrity, and confidentiality, implementing robust security measures to protect personal data from unauthorized access, loss, or damage.

Another important aspect of GDPR compliance is respecting individuals’ rights. The GDPR grants individuals several rights, including the right to access their data, the right to rectify inaccuracies, the right to erasure (commonly known as the “right to be forgotten”), the right to restrict processing, the right to data portability, and the right to object to processing in certain circumstances. Organizations must establish processes to handle these requests effectively and promptly. Where processing relies on consent, they should also ensure that individuals have given valid, informed consent for the processing of their personal data, and offer a clear mechanism for withdrawing it.

Non-compliance with the GDPR can result in severe consequences, including significant fines and reputational damage. Organizations should therefore prioritize GDPR compliance efforts by conducting regular data protection assessments, implementing appropriate technical and organizational measures, appointing a Data Protection Officer (DPO) when necessary, providing staff training on data protection obligations, and maintaining comprehensive documentation to demonstrate compliance. By embracing GDPR compliance as a core principle, organizations can not only protect individuals’ privacy rights but also foster trust and confidence among their customers and stakeholders.

Some Essential GDPR Definitions

A “controller” under the GDPR is the organisation or company that, alone or jointly with others, determines the purposes and means of the processing of personal data, whereas a “processor” carries out the processing of personal data on behalf of the controller. A processor can engage “sub-processors”, but only with the controller’s prior written authorisation, which gives the controller visibility and approval rights over them.

The GDPR does not refer to “clients” or “customers”; the terms used consistently throughout are “natural person” and “data subject”. “Personal data” means any information relating to an identified or identifiable natural person (“data subject”). For the purpose of this article, end clients and customers will be referred to as “data subjects”.

“Processing” means any operation or set of operations which is performed on personal data or on sets of personal data, whether or not by automated means, such as collection, recording, organisation, structuring, storage, adaptation or alteration, retrieval, consultation, use, disclosure by transmission, dissemination or otherwise making available, alignment or combination, restriction, erasure or destruction.

Article 4 of GDPR contains a full list of definitions.

Carry out an audit of data held

Once a company knows what is required to comply with the GDPR, a key starting point would be to carry out an audit of the personal data it’s currently processing and document it in line with Article 30 of GDPR. The company or organisation needs to consider areas such as:

- What personal data is being processed? What categories of personal data are being processed? Is there any special category data which requires extra safeguards?

- What is the legal basis for processing the personal data?

- Where is the personal data being processed, and is it sent outside the EU for processing? Where personal data is processed outside the EU, an appropriate transfer mechanism is required.

- Who is responsible for processing the personal data? Are contracts in place with data processors?

- What is the personal data being used for? Is it shared with external parties?

- Is it necessary to still be retaining the personal data? For how long?

One of the most important questions above is whether it is still necessary to retain the personal data. The GDPR stipulates that data should only be used for the purpose for which it was originally obtained, or for a purpose compatible with it. If this purpose no longer exists, the data should be destroyed or returned to the data subject, unless there is a legally valid obligation to retain it. It is also worth noting that the less personal data a company holds, the smaller the impact of any data security issue is likely to be. The company should have a retention policy which sets out how long each type of data is retained, and it should then adhere to that policy.
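The audit questions above can be captured in a simple data inventory entry. The following is a minimal Python sketch, not a prescribed Article 30 format: the `ProcessingRecord` class, its field names, and the three checks are hypothetical and chosen only to mirror the questions in this section.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class ProcessingRecord:
    """One entry in a hypothetical data inventory (field names are illustrative)."""
    name: str
    purpose: str
    data_categories: List[str]
    legal_basis: str                    # e.g. "consent", "contract"; empty if unknown
    processed_outside_eu: bool
    transfer_mechanism: Optional[str]   # e.g. "standard contractual clauses"
    retention_days: int                 # taken from the company's retention policy
    collected_on: date

    def retention_expired(self, today: date) -> bool:
        # True once the retention period set by the policy has passed.
        return today > self.collected_on + timedelta(days=self.retention_days)

    def audit_findings(self, today: date) -> List[str]:
        # Collect the issues this entry raises against the audit questions.
        findings = []
        if not self.legal_basis:
            findings.append("no legal basis recorded")
        if self.processed_outside_eu and not self.transfer_mechanism:
            findings.append("transfer outside the EU without a transfer mechanism")
        if self.retention_expired(today):
            findings.append("retention period has passed")
        return findings


record = ProcessingRecord(
    name="newsletter",
    purpose="marketing emails",
    data_categories=["email address"],
    legal_basis="consent",
    processed_outside_eu=True,
    transfer_mechanism=None,
    retention_days=365,
    collected_on=date(2022, 1, 1),
)
findings = record.audit_findings(today=date(2024, 1, 1))
```

Running the checks across every entry in the inventory gives a working list of items to fix, which can itself be kept as evidence of the audit.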

Identify GDPR risks

Companies need to identify any high-risk data processing that may pose risks to the rights and freedoms of data subjects. Data Protection Impact Assessments (DPIAs) should be used for this purpose where applicable. Once it has identified the risks, the company needs to mitigate them. If mitigation does not seem possible, the company must consult the relevant Data Protection Authority (DPA) about the processing of the personal data. This type of consultation is expected to be the exception rather than the rule, but where a high risk cannot be mitigated, it is required in order to comply with the GDPR. The European Data Protection Board has issued guidelines on when DPIAs are necessary.
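As an illustration of how a first-pass DPIA screening might be recorded, here is a minimal Python sketch. It assumes the rule of thumb from the WP29/EDPB DPIA guidelines that processing meeting two or more of the listed criteria will, in most cases, call for a DPIA. The criterion names are paraphrased, the `dpia_recommended` helper is hypothetical, and the result is a prompt for review rather than a legal determination, so check it against the current guidance.

```python
from typing import Set

# Screening criteria paraphrased from the WP29/EDPB DPIA guidelines.
# The identifiers are our own shorthand, not official terms.
DPIA_CRITERIA = {
    "evaluation_or_scoring",
    "automated_decisions_with_significant_effect",
    "systematic_monitoring",
    "sensitive_or_highly_personal_data",
    "large_scale_processing",
    "matching_or_combining_datasets",
    "vulnerable_data_subjects",
    "innovative_technology",
    "prevents_exercising_a_right_or_using_a_service",
}


def dpia_recommended(flags: Set[str], threshold: int = 2) -> bool:
    """Return True when the number of criteria met reaches the threshold.

    The default threshold of 2 is an assumption based on the guidelines'
    rule of thumb; a single criterion can still justify a DPIA.
    """
    unknown = flags - DPIA_CRITERIA
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    return len(flags) >= threshold


needs_dpia = dpia_recommended(
    {"large_scale_processing", "sensitive_or_highly_personal_data"}
)
```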

| GDPR Risks | Description |
|---|---|
| Data Breaches | Unauthorized access, accidental loss, or theft of personal data, potentially leading to harm for individuals and reputational damage for the organization. |
| Insufficient Security Measures | Inadequate technical and organizational safeguards to protect personal data against unauthorized access, such as weak passwords, lack of encryption, or outdated security protocols. |
| Non-Compliant Data Transfers | Transferring personal data to countries outside the EEA without implementing appropriate safeguards, such as standard contractual clauses or binding corporate rules. |
| Lack of Consent or Lawful Basis | Processing personal data without obtaining valid consent or establishing a lawful basis as required by the GDPR. |
| Incomplete Data Subject Rights Processes | Inability to effectively handle individuals’ rights, including access requests, rectification, erasure, or objection, within specified timeframes. |
| Inadequate Vendor Management | Failure to assess the data protection practices of third-party vendors or processors, resulting in non-compliant data sharing or inadequate protection of personal data. |
| Lack of Awareness and Training | Insufficient training and awareness programs for employees regarding GDPR requirements, potentially leading to mishandling of personal data. |
| Inaccurate or Incomplete Privacy Notices | Failure to provide individuals with transparent and comprehensive information about data processing activities, purposes, legal basis, retention periods, and rights, as required by the GDPR. |
| Inadequate Data Protection Impact Assessments | Failure to conduct DPIAs for high-risk processing activities, resulting in insufficient identification and mitigation of potential privacy risks. |
| Failure to Appoint a Data Protection Officer | Not designating a DPO when required by the GDPR, resulting in a lack of expert oversight and guidance on data protection matters. |
| Inadequate Data Retention and Disposal Practices | Retaining personal data for longer than necessary or failing to securely dispose of it, leading to potential privacy risks and non-compliance with GDPR principles. |
| Lack of Incident Response Plan | Insufficient planning and procedures to detect, assess, and respond to data breaches promptly, potentially leading to delayed or inadequate incident response. |

Ensure that policies and procedures are in place

In order to comply with the GDPR, companies need to have documented policies and procedures in place. Key policies and procedures are:

- A data protection policy which sets out how the company or organisation processes personal data.

- An employee notice which sets out how the company or organisation processes the personal data of employees.

- A data breach policy which sets out how the company or organisation manages a data breach.

- A data retention policy which details how long the company or organisation will retain different types of personal data.

- A training guide for staff on data protection best practices.

- A description of the technical and organisational measures used to keep personal data safe and secure.

- A policy and procedure covering data subject rights and how to respond to requests.

- A contracts workstream, where the company or organisation is a data controller and is required to sign contracts with its data processors.

- A data inventory as detailed in Article 30 of the GDPR.

Accountability is a key component of the GDPR, and companies and organisations need to be able to demonstrate compliance, hence the data inventory detailed in Article 30 of the GDPR.

Plan for data breaches

Under the GDPR, personal data breaches must be reported to the relevant supervisory authority within seventy-two hours of the company becoming aware of the breach, unless the breach is unlikely to result in a risk to the rights and freedoms of data subjects. It is therefore important for every company to have procedures in place for dealing with a data breach. The GDPR is not prescriptive about the exact technical measures a company or organisation should have in place, but it states as a core principle that appropriate technical and organisational measures must be in place to prevent unlawful processing and the accidental loss, destruction or damage of data. It is therefore mandatory to keep information secure, and also to have plans in place for what to do if that security is breached. Failure to comply with the GDPR’s data breach requirements may lead to a costly fine. It could also lead to a damaged reputation, which could be even more costly in the long term, due to potential loss of custom and resulting falls in revenue.
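An incident response procedure typically tracks the seventy-two-hour window from the moment the company becomes aware of the breach. The sketch below shows one way to compute that deadline in Python. The function names are hypothetical, and the sketch assumes the clock is kept in timezone-aware timestamps so that the deadline is unambiguous across offices.

```python
from datetime import datetime, timedelta, timezone

# Reporting window described above: 72 hours from becoming aware of the breach.
NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(became_aware_at: datetime) -> datetime:
    """Latest time by which the supervisory authority should be notified."""
    if became_aware_at.tzinfo is None:
        raise ValueError("use a timezone-aware datetime")
    return became_aware_at + NOTIFICATION_WINDOW


def hours_remaining(became_aware_at: datetime, now: datetime) -> float:
    """Hours left in the window; negative once the deadline has passed."""
    remaining = notification_deadline(became_aware_at) - now
    return remaining.total_seconds() / 3600


aware = datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc)
deadline = notification_deadline(aware)
left = hours_remaining(aware, datetime(2024, 3, 2, 9, 0, tzinfo=timezone.utc))
```

Recording the time of awareness alongside the computed deadline in the breach log also supports the documentation needed to demonstrate compliance.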

Engage the services of a Data Protection Officer (DPO)

Under the GDPR, any company or organisation that controls or processes personal data on a large scale must consider engaging the services of a DPO, either internally or via an external provider. A DPO is mandatory where the core activities involve regular and systematic monitoring of data subjects on a large scale. The same applies if the core activities involve large-scale processing of special category data, such as racial or ethnic origin, political opinions, religious beliefs, trade union membership, sex life or sexual orientation, health information, and genetic or biometric data. Public bodies that process personal data must also have a DPO in place.

Qualified DPOs are likely to be in short supply. The GDPR does not stipulate which formal qualifications a DPO must hold. They do, however, need to be fully versed in what the GDPR covers and how it affects the business. They also need to be able to create and manage data protection systems and processes. A company can move someone internally into the DPO role, but that person must have the required awareness and comprehensive training in all aspects of the GDPR, and must have no conflict of interest if they hold another role within the company or organisation.

Monitoring and reporting

Once a company has systems in place that will enable it to comply with the GDPR, it also needs to develop monitoring and performance processes. These processes need to exist for two reasons. First, every company needs to be able to check that its processes are working and that it is fully GDPR compliant at all times. Second, every company needs to be able to prove that it is compliant, should it be audited by the relevant data protection authority. Companies can only prove that they are compliant if everything they do with regard to data management and protection is documented, and if they can show that a system of controls is in place.

| GDPR Monitoring and Reporting | Description |
|---|---|
| Monitoring | Regularly monitor data processing activities, privacy controls, and security measures to identify potential breaches or vulnerabilities, utilizing tools such as monitoring systems, internal audits, and data protection impact assessments (DPIAs). |
| Incident Reporting | Establish processes for promptly detecting, assessing, and responding to data breaches, including notifying the relevant supervisory authority within the required timeframe, and if necessary, informing affected individuals about the breach and its potential impact on their personal data. |
| Compliance Assessments | Conduct periodic assessments and reviews to evaluate the effectiveness of data protection measures, identify areas of non-compliance, and implement corrective actions through internal assessments, external audits, or engaging third-party experts for impartial evaluations. |
| Record-Keeping | Maintain comprehensive documentation and records of data processing activities, data subject requests, consent records, privacy impact assessments (PIAs), and other relevant information necessary to demonstrate compliance with GDPR requirements. |
| Reporting to Supervisory Authorities | Cooperate with supervisory authorities, respond to requests for information, provide access to documentation, and facilitate investigations or audits conducted by supervisory authorities as required. |
| Internal Reporting | Establish internal reporting channels for employees to report potential data protection issues or breaches, fostering a culture of transparency and accountability within the organization, encouraging employees to contribute to maintaining GDPR compliance. |

GDPR Compliance Checklist

- Ensure that contracts with data processing providers reflect the respective GDPR responsibilities.

- Audit any personal data that the company processes to ensure that it’s accurate, up to date and that the company still has a legal basis to retain it. Complete a data inventory and ensure that your data inventory is compliant with the GDPR Article 30 requirement.

- Ensure the company has a fit-for-purpose retention policy, that the company adheres to it, and that the policy respects any statutory data retention requirements that apply, such as those for the insurance industry.

- Ensure that the company has the appropriate Technical and Organisational measures in place to keep personal data safe and secure.

- Ensure the company is set up and trained as an organisation to deal with data subject access requests and other requests from data subjects about their personal data. The GDPR introduces new data subject rights around data portability and data erasure. Erasure is not an absolute right: if the company has a legal requirement to retain the data, it may retain it. The data portability right means that a company or organisation may have to transfer a client’s data in a machine-readable format to a rival company or organisation.

- Ensure the company is set up and trained to deal with any data breaches and reporting of such to the data protection authorities.

- General training and awareness around data protection is critical for organisations, as incorrect disclosure of data is a common cause of data protection breaches.

- Ensure that the company’s privacy policy is updated and communicated to data subjects.

- Ensure the company is legally entitled to process personal data, meaning that it has an applicable legal basis, be that contract, legal obligation or consent. Where processing is based on consent, ensure that the required consent is in place and that there are proper records of it. There are six legal bases for processing personal data set out in Article 6 of the GDPR, and additional conditions for special category data set out in Article 9.

- Demonstrating compliance is a key part of the GDPR’s accountability requirement: organisations must be able to demonstrate compliance with the regulation by means of a paper trail. Ensure there is ongoing monitoring of compliance.

- Consider appointing a DPO (Data Protection Officer). The Article 29 Working Party (WP29), the predecessor of the European Data Protection Board, issued guidance on which organisations should consider appointing a DPO.

- Data minimisation and data protection by design and by default must be core principles of any data processing. This means collecting only the personal data required for the purpose and treating data protection as a key lifecycle component of any processing in the company or organisation.
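To make the data portability item in the checklist above more concrete, the following Python sketch exports one data subject's records in a machine-readable form. The choice of JSON, the `export_personal_data` function, and the record layout with a `subject_id` key are assumptions for illustration only; a real export would follow the company's own data model.

```python
import json
from typing import Any, Dict, List


def export_personal_data(subject_id: str, records: List[Dict[str, Any]]) -> str:
    """Return one data subject's records as a JSON document.

    Records belonging to other data subjects are left out, so the export
    contains only the requester's own personal data.
    """
    own_records = [r for r in records if r.get("subject_id") == subject_id]
    payload = {"subject_id": subject_id, "records": own_records}
    # default=str turns dates and similar values into plain strings.
    return json.dumps(payload, indent=2, sort_keys=True, default=str)


records = [
    {"subject_id": "ds-001", "type": "contact", "email": "ann@example.com"},
    {"subject_id": "ds-002", "type": "contact", "email": "bob@example.com"},
    {"subject_id": "ds-001", "type": "policy", "policy_number": "P-100"},
]
export = export_personal_data("ds-001", records)
```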

Benefits of GDPR Compliance Checklist

A GDPR compliance checklist should be used because it provides a structured framework that helps organizations navigate the complexities of the General Data Protection Regulation. It helps ensure that all necessary requirements are addressed, that potential risks are mitigated, and that data protection practices are consistent and well documented. This reduces the risk of non-compliance, penalties, and reputational damage while demonstrating a proactive commitment to protecting individuals’ personal data. See the table below for a list of benefits.

| Benefits of a GDPR Compliance Checklist | Description |
|---|---|
| Comprehensive Guidance | Provides a structured and comprehensive guide to address all necessary GDPR requirements, reducing the risk of overlooking obligations. |
| Efficiency | Streamlines compliance efforts by breaking down complex regulations into actionable steps, making the process more efficient and manageable. |
| Compliance Assurance | Serves as a documented record of completed tasks, demonstrating a proactive approach to data protection and ensuring compliance. |
| Risk Mitigation | Helps identify potential gaps or vulnerabilities in data protection practices, allowing organizations to proactively address issues and reduce risk. |
| Consistency | Promotes consistent data protection practices across the organization, ensuring that all relevant areas are addressed uniformly. |
| Documentation | Provides a clear record of compliance efforts, demonstrating due diligence and serving as evidence in audits or legal proceedings. |
| Employee Awareness and Training | Facilitates education and training programs, raising employee awareness of responsibilities and the importance of GDPR compliance. |
| Continuous Improvement | Serves as a reference for regular reviews and assessments, identifying areas for improvement and enhancing data protection practices. |
| Flexibility and Customization | Allows customization based on specific organizational needs and circumstances, adapting the checklist to industry, size, and complexity. |
| Adaptability to Changes | Provides a framework for adapting to evolving compliance requirements, ensuring ongoing compliance with updated guidelines and best practices. |